How to Upload a Selfie to Google Art

In June of 2015, a seemingly innocuous post on Google's research blog detailed new work being done on a type of artificial intelligence. Even if you just skimmed the entry, you could tell that something extraordinary was afoot: It showed bizarre, dreamlike mutations of banal photographs, courtesy of a neural network called DeepDream that learned how to make images by looking at millions of them.

In essence, they were machine dreams. "Yes, androids do dream of electric sheep," wrote The Guardian. Soon the story had gone viral, giving the rest of the world an introduction to the burgeoning community of engineers and artists working on computer vision and neural networks, on which DeepDream is based. It spawned hundreds of follow-ups and dozens of image generators, and made neural networks a household term.

This weekend the first art exhibit and auction dedicated to neural networking (curated by Joshua To, a design and UX lead for VR at Google) opened at the San Francisco gallery and arts foundation Gray Area. The idea for the show, according to To, evolved directly from the explosion of online interest in the project. "DeepDream had gone viral and everyone's experience was seeing the work on their phone or laptop screens," he told me over email. "We thought it would be powerful to curate a collection of pieces so that people can experience the work printed large, high quality, and framed professionally with gallery lighting."

Within the gallery, art from 10 different artists and engineers hangs on the walls, chosen to represent the sheer diversity of ways computer vision can be used to make art. Each artist has a unique background, ranging from the VR filmmaker Jessica Brillhart to Josh Nimoy, a computational artist who worked on Tron: Legacy. While it would have been easy to let the bizarre (and quite beautiful) imagery stand alone, To says that explaining the ideas behind neural networking was absolutely crucial. But if you've ever tried to explain DeepDream out loud (and you don't work in AI), you know that's easier said than done.

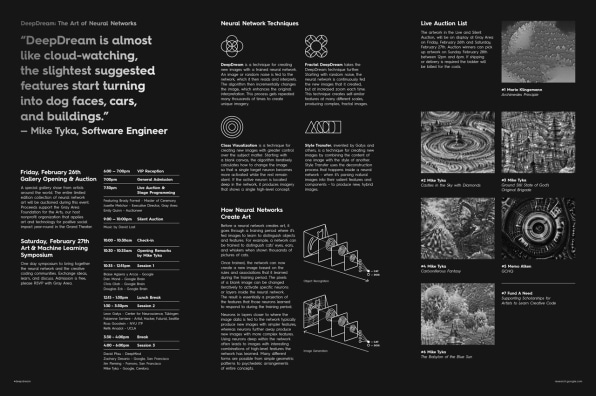

What emerged was a classic problem of information design: how to communicate the remarkably complex and cerebral ideas behind neural networks to the public, without using the relatively esoteric language and ideas native to AI, all in a digestible format that visitors could take with them. Led by Gray Area, the exhibition team came up with a brochure filled with metaphor-rich language explaining the basics ("DeepDream is almost like cloud-watching," writes featured artist Mike Tyka).

But it also included a symbol-based wayfinding system for understanding the tech behind each piece of art. First, it lays out, in dead-simple terms, the four different techniques that were on view in the gallery: DeepDream, Class Visualization, Style Transfer, and Fractal DeepDream. Then, it assigns each technique a graphic symbol. Below each piece within the gallery, a placard identifies not only the artist and year, but also an icon that corresponds to the technique the artist used.

It's a semantic wayfinding system designed to help visitors navigate the esoteric world of artificial intelligence.

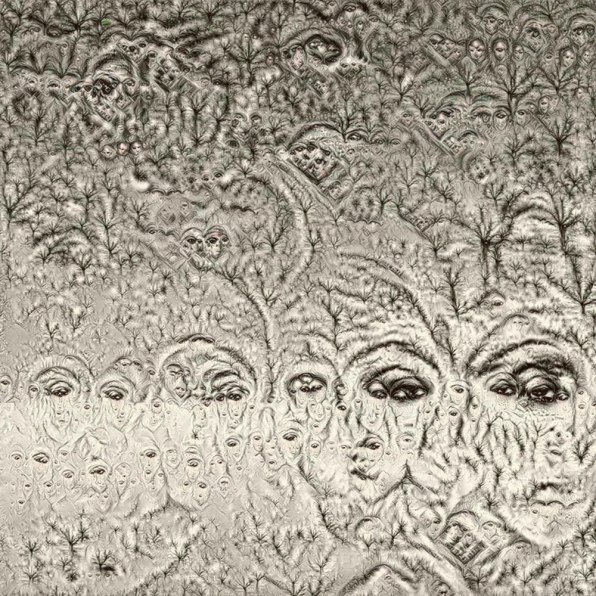

For example, take this piece by the self-described code artist Mario Klingemann, a sepia-toned tangle of eyes and jawlines. In the gallery, the piece is labeled with an overlapping series of concentric rings. Take a look at the brochure, and you'll see this symbol refers to a straightforward use of DeepDream, where a trained neural network is fed an image (presumably here, a woman's face), which is then incrementally changed by the algorithm, the curators explain, creating a feedback loop between the original image and the neural network's reading of it. "This process gets repeated many thousands of times to create unique imagery," they write.
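For readers who think in code, that feedback loop can be sketched in a few lines of Python. This is a toy illustration, not the software used for the pieces in the show: a random matrix stands in for the trained network, a flat list of 64 numbers stands in for the photograph, and the names `toy_layer`, `dream_step`, `dream`, and `score`, along with the step size and iteration count, are all assumptions made up for this example. What it does share with the description above is the shape of the loop: read the image, nudge the image toward whatever the network responded to, and repeat.

```python
import numpy as np


def toy_layer(image, weights):
    """Stand-in for one layer of a trained network: linear map plus ReLU."""
    return np.maximum(weights @ image, 0.0)


def score(image, weights):
    """How strongly the layer responds to the image (the quantity to boost)."""
    return 0.5 * np.sum(toy_layer(image, weights) ** 2)


def dream_step(image, weights, step_size=0.01):
    """Nudge the image so the layer's existing response gets stronger."""
    activations = toy_layer(image, weights)
    # Gradient of score() with respect to the image.
    gradient = weights.T @ activations
    # Normalize so each step changes the image by a similar amount.
    gradient /= np.abs(gradient).mean() + 1e-8
    return image + step_size * gradient


def dream(image, weights, iterations=100):
    """The feedback loop: each pass starts from the previous pass's output."""
    for _ in range(iterations):
        image = dream_step(image, weights)
    return image


# Hypothetical inputs: random weights instead of a trained network,
# and random pixel values instead of a real photograph.
rng = np.random.default_rng(0)
weights = rng.normal(size=(16, 64))
photo = rng.uniform(size=64)

dreamed = dream(photo, weights)
print("response before:", score(photo, weights))
print("response after: ", score(dreamed, weights))
```

The second printed number should be larger than the first, which is the whole trick: the loop amplifies what the network already "sees" in the picture. A real system would swap the random matrix for a network trained on millions of images, which is where the eyes and jawlines come from.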

According to Gray Area's Josette Melchor, the full process of developing and organizing the show, auction, and accompanying symposium with Google took a full six months. "It was incredibly important to us that people who attended the event could leave having a solid understanding of neural networks and DeepDream," To says. "We put in a lot of work to achieve this goal." The sale of the pieces this weekend raised almost $98,000 to benefit the gallery's mission of supporting young artists working with technology through scholarships.

Each of the featured pieces represented a collaboration between a machine and a human. Finding a way to explain that complex and very nascent working relationship to the public isn't just important in the context of the exhibit, it's important in the context of advancing artificial intelligence in general.


Source: https://www.fastcompany.com/3057368/inside-googles-first-deepdream-art-show
